Amazon Redshift

Amazon Redshift is a data warehouse product which forms part of the larger cloud-computing platform Amazon Web Services.

Amazon Redshift-Specific Features and Limitations

Skyvia has the following limitations for Amazon Redshift:

- Synchronization is not supported for Amazon Redshift.

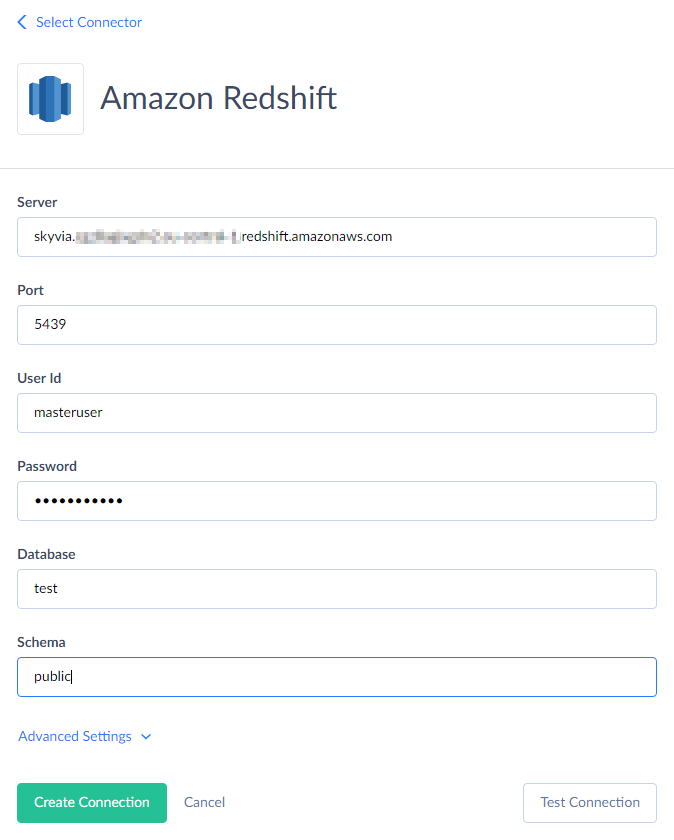

Amazon Redshift Connections

To connect to Redshift, you need to specify the server host name or IP address, the port the server is running on, the user name and password to log in with, and the database name. If you need to import data to Redshift via Skyvia or perform replication, you will need to specify additional advanced parameters described below.

You need to specify the following parameters for Amazon Redshift connection:

-

Name — connection name that will be used to identify the connection in the objects list and when selecting a connection for an integration.

-

Server — name or IP address of the Redshift host to connect to.

-

Port — Redshift connection port; default value is 5432.

-

User Id — the username used to log in.

-

Password — password to log in with.

-

Database — the name of the Redshift database to which you are connecting.

-

Schema — the name of the Redshift schema to which you are connecting.

To execute Import or Replication integrations via this connection, you must configure Advanced Settings and provide parameters for connecting to Amazon S3.

This is required because Skyvia uses Redshift’s data import from Amazon S3 for these operations. Specifically, Skyvia uploads data as CSV files to Amazon S3, instructs Redshift to import the data, and deletes the CSV files after the import is complete. Therefore, you must specify the S3 region to use and provide either AWS Security Token or AWS Access Key ID and AWS Secret Key. You may also optionally specify the S3 Bucket Name for file uploads. Below are descriptions of these parameters:

-

AWS Access Key ID — first part of your Amazon Web Services access key.

-

AWS Secret Key — second part of your Amazon Web Services access key. Read more about AWS access keys...

-

AWS Security Token — alternative to AWS Access Key ID and AWS Secret Key pair, Amazon Web Services Security token is a temporary limited-privilege credential.

-

S3 Region — AWS region, where your S3 storage is hosted.

-

S3 Bucket Name — here you may specify the name of your S3 bucket to temporarily load the file with imported or replicated data to. If you leave it empty, Skyvia will create a new bucket when importing or replicating data to Redshift and delete it after the operation is finished.

SSL Connections

To use an SSL connection, click Advanced Settings and select SSL under Protocol.

As of January 10, 2025, all newly created Amazon Redshift clusters have the

require_sslparameter set totrueby default. If a connection attempt over TCP fails, check your cluster's configuration settings. Ifrequire_sslparameter іs set totrue, make sure to connect via SSL or changerequire_sslvalue tofalse.

-

SSL Mode — this mode determines the priority of using secure SSL connection. You can select any of the following modes:

-

Allow — try first a non-SSL connection, then if that fails, try an SSL connection.

-

Disable — establish only an unencrypted connection. If this mode is selected, SSL is not used, and other SSL parameters are not available. This mode is selected by default.

-

Prefer — try first an SSL connection, then if that fails, try a none-SSL connection.

-

Require — establish only a secure SSL connection.

-

-

SSL CA Cert — authority certificate. Click the button with three dots to open a multiline editor and paste the content of the certificate file there.

SSH Connections

To use an SSH connection, click Advanced Settings and select SSH under Protocol:

-

SSH Authentication Type — type of SSH authentication to use: Password or PublicKey. When using the password authentication, you need to specify the SSH password. For public key authentication, you need to specify the passphrase for the private key and the private key.

-

SSH Host — name or IP address of the SSH server.

-

SSH Port — TCP/IP port to connect to the SSH Server. By default, it is 22.

-

SSH User — user name on the machine where the SSH Server is running. It is a Windows user, not a user of the MySQL Server.

-

SSH Password — password of a user account on the SSH Server. It is available if Password SSH Authentication Type is selected.

-

SSH Passphrase — passphrase for a private key. You can set it while generating public and private key files through a key generator tool, for example PuTTygen. It is available if PublicKey SSH Authentication Type is selected.

-

SSH Private Key — private key. Click the button with three dots to open a multiline editor and paste the content paste the content of the key file there. It is available if PublicKey SSH Authentication Type is selected.

Other Advanced Parameters

-

Unicode — select this checkbox to use Unicode encoding for the connection.

-

Command Timeout — this parameter specifies the wait time (in seconds) before terminating an attempt to execute a command and generating an error. Note that it is the time to wait for any server reply since the command was sent to a server, and it doesn't include the time necessary to fetch data if the command selects some data.

-

Connection Timeout — this parameter determines the time (in seconds) to wait while trying to establish a connection before terminating the attempt and reporting an error. Generally you don't need to modify it, but in some specific cases when a connection to the database server is not good you may try increasing its value.

-

Use Bulk Import — this parameter affects import integrations with the INSERT, UPDATE and DELETE operations and with Amazon Redshift as a target.

By default, these integrations import data as follows: Skyvia writes data into multiple temporary CSV files, uploads them to Amazon S3, and then instructs Redshift to import the data from these files. These actions occur simultaneously to ensure optimal performance. Once the CSV files are imported, they are deleted. However, when data is imported this way, it is not possible to generate a per-record error log.

If you disable bulk import, Skyvia will use standard INSERT, UPDATE, and DELETE statements instead. This allows you to obtain a per-record error log but significantly reduces performance. Therefore, disabling bulk import is not recommended unless you:

-

Are importing a small number of records and require a per-record error log, or

-

Do not want to use Amazon S3 for temporary file storage.

-

Supported Actions and Actions Specifics

Amazon Redshift connector supports the following actions:

-

Execute Command in Source, Lookup, and Target Data Flow components and in Import and Export tasks in the Advanced mode.

-

Execute Query in Source Data Flow components and in Import and Export tasks in the Advanced mode.

-

Lookup in Lookup Data Flow components.

-

Insert in Target Data Flow components.

-

Update in Target Data Flow components.

-

Delete in Target Data Flow components.